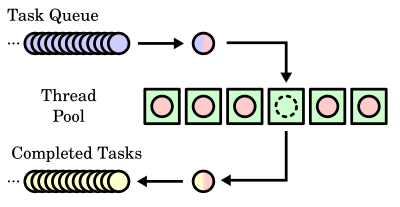

Thread pool

In computer programming, a thread pool is a software design pattern for achieving concurrency of execution in a computer program. Often also called a replicated workers or worker-crew model,[1] a thread pool maintains multiple threads waiting for tasks to be allocated for concurrent execution by the supervising program. By maintaining a pool of threads, the model increases performance and avoids latency in execution due to frequent creation and destruction of threads for short-lived tasks.[2] Another benefit is the ability to limit system load: because the pool holds a bounded number of threads, a burst of tasks does not create an equally large burst of threads. The number of threads is tuned to the computing resources available to the program, and after completing a task each thread returns to wait for the next one from the task queue.

Performance

The size of a thread pool is the number of threads kept in reserve for executing tasks. It is usually a tunable parameter of the application, adjusted to optimize program performance.[3] Because the best size depends on the workload and the hardware, choosing it well is crucial to performance.
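A minimal Go sketch of pool size as a tunable parameter: `runPool` (a hypothetical helper) runs tasks on a fixed number of goroutines and reports the peak number of tasks that ran at once, which never exceeds the pool size. Starting from `runtime.NumCPU()` is an assumption suited to CPU-bound work, not a rule.

```go
package main

import (
	"fmt"
	"runtime"
	"sync"
	"sync/atomic"
	"time"
)

// runPool runs tasks on a fixed pool of size goroutines and returns the
// highest number of tasks observed running at the same time.
func runPool(size int, tasks []func()) int64 {
	queue := make(chan func())
	var wg sync.WaitGroup
	var running, peak int64

	for i := 0; i < size; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for task := range queue {
				n := atomic.AddInt64(&running, 1)
				// Raise peak to n if n is larger (compare-and-swap loop).
				for {
					p := atomic.LoadInt64(&peak)
					if n <= p || atomic.CompareAndSwapInt64(&peak, p, n) {
						break
					}
				}
				task()
				atomic.AddInt64(&running, -1)
			}
		}()
	}
	for _, t := range tasks {
		queue <- t
	}
	close(queue)
	wg.Wait()
	return atomic.LoadInt64(&peak)
}

func main() {
	// runtime.NumCPU() is a common starting point for CPU-bound work,
	// not a universally optimal size; I/O-bound work often uses more.
	size := runtime.NumCPU()
	tasks := make([]func(), 4*size)
	for i := range tasks {
		tasks[i] = func() { time.Sleep(10 * time.Millisecond) }
	}
	fmt.Printf("pool size %d, peak concurrency %d\n", size, runPool(size, tasks))
}
```

Changing `size` and timing a real workload is one way to tune the parameter empirically.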

One benefit of a thread pool over creating a new thread for each task is that thread creation and destruction overhead is restricted to the initial creation of the pool, which may result in better performance and better system stability. Creating and destroying a thread and its associated resources can be an expensive process in terms of time. An excessive number of threads in reserve, however, wastes memory, and context-switching between the runnable threads incurs performance penalties. A socket connection to another network host, which might take many CPU cycles to drop and re-establish, can be maintained more efficiently by associating it with a thread that lives over the course of more than one network transaction.

A thread pool can be useful even when thread startup time is not a concern. There are implementations of thread pools that make it trivial to queue up work, control concurrency and synchronize threads at a higher level than can be done easily when manually managing threads.[4][5] In these cases, the performance benefits may be secondary.

Typically, a thread pool executes on a single computer. However, thread pools are conceptually related to server farms in which a master process, which might be a thread pool itself, distributes tasks to worker processes on different computers, in order to increase the overall throughput. Embarrassingly parallel problems are highly amenable to this approach.[citation needed]
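The master/worker split for an embarrassingly parallel problem can be sketched on a single machine in Go, with goroutines standing in for the worker processes that a server farm would run on separate computers. The chosen problem (summing squares over a range split into independent chunks) and the `sumSquares` helper are assumptions for illustration.

```go
package main

import (
	"fmt"
	"sync"
)

// chunk is one independent piece of work: the half-open range [lo, hi).
type chunk struct{ lo, hi int }

// sumSquares computes 0² + 1² + ... + (n-1)² by splitting the range into
// chunks that workers goroutines process independently. No chunk depends
// on another, which is what makes the problem embarrassingly parallel.
func sumSquares(n, workers, chunkSize int) int {
	chunks := make(chan chunk)
	partials := make(chan int)
	var wg sync.WaitGroup

	for w := 0; w < workers; w++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for c := range chunks {
				s := 0
				for i := c.lo; i < c.hi; i++ {
					s += i * i
				}
				partials <- s
			}
		}()
	}

	// The "master" hands out chunks, then closes partials once all workers exit.
	go func() {
		for lo := 0; lo < n; lo += chunkSize {
			hi := lo + chunkSize
			if hi > n {
				hi = n
			}
			chunks <- chunk{lo, hi}
		}
		close(chunks)
		wg.Wait()
		close(partials)
	}()

	// Combine the independent partial results.
	total := 0
	for s := range partials {
		total += s
	}
	return total
}

func main() {
	n := 1000
	fmt.Println(sumSquares(n, 4, 100))
	fmt.Println((n - 1) * n * (2*n - 1) / 6) // closed form, for comparison
}
```

Both lines print the same value; only the final combination step requires any coordination between workers.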

The number of threads may be dynamically adjusted during the lifetime of an application based on the number of waiting tasks. For example, a web server can add threads if numerous web page requests come in and can remove threads when those requests taper off.[disputed] The cost of having a larger thread pool is increased resource usage. The algorithm used to determine when to create or destroy threads affects the overall performance:

- Creating too many threads wastes resources and costs time creating the unused threads.

- Destroying too many threads requires more time later when creating them again.

- Creating threads too slowly might result in poor client performance (long wait times).

- Destroying threads too slowly may starve other processes of resources.

In languages


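In Bash, a bounded pool of worker processes can be obtained with the `--max-procs` (`-P`) option of `xargs`, for example:[6][7][8]

```bash
# Fetch the headers of 5 URLs in parallel (at most 5 curl processes at once)
# and print each Content-Length line
urls=(
	"https://example.com/file1.txt"
	"https://example.com/file2.txt"
	"https://example.com/file3.txt"
	"https://example.com/file4.txt"
	"https://example.com/file5.txt"
)

printf '%s\n' "${urls[@]}" | xargs -P 5 -I {} curl -sI {} | grep -i "content-length:"
```

In Go, the pattern is commonly called a worker pool; goroutines act as the workers and a channel serves as the job queue: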

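```go
package main

import (
	"fmt"
	"time"
)

// worker receives jobs until the jobs channel is closed, simulates one
// second of work per job and sends the result on results.
func worker(id int, jobs <-chan int, results chan<- int) {
	for j := range jobs {
		fmt.Println("worker", id, "started job", j)
		time.Sleep(time.Second)
		fmt.Println("worker", id, "finished job", j)
		results <- j * 2
	}
}

func main() {
	const numJobs = 5
	jobs := make(chan int, numJobs)
	results := make(chan int, numJobs)

	// Start a pool of three workers; they block until jobs arrive.
	for w := 1; w <= 3; w++ {
		go worker(w, jobs, results)
	}

	// Queue all jobs, then close the channel to signal that no more work follows.
	for j := 1; j <= numJobs; j++ {
		jobs <- j
	}
	close(jobs)

	// Receive every result; this also waits until all jobs have finished.
	for a := 1; a <= numJobs; a++ {
		<-results
	}
}
```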

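Running it prints output similar to the following; the order of the lines varies between runs. The five one-second jobs finish in about two seconds because three workers process them concurrently:

```
$ time go run worker-pools.go
worker 1 started job 1
worker 2 started job 2
worker 3 started job 3
worker 1 finished job 1
worker 1 started job 4
worker 2 finished job 2
worker 2 started job 5
worker 3 finished job 3
worker 1 finished job 4
worker 2 finished job 5

real 0m2.358s
```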
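The Go example above discards the results and knows that all jobs are done only because it counts exactly `numJobs` receives. A common variant, sketched here under the assumption that the caller wants the results themselves, uses a `sync.WaitGroup` to close the results channel once every worker has returned, so the results can be consumed with a plain `range` loop. The `run` helper is hypothetical and the one-second sleep is omitted.

```go
package main

import (
	"fmt"
	"sync"
)

// worker doubles each job it receives and signals wg when jobs is closed
// and drained.
func worker(jobs <-chan int, results chan<- int, wg *sync.WaitGroup) {
	defer wg.Done()
	for j := range jobs {
		results <- j * 2
	}
}

// run starts numWorkers workers, feeds them numJobs jobs and returns the
// sum of all results.
func run(numWorkers, numJobs int) int {
	jobs := make(chan int, numJobs)
	results := make(chan int, numJobs)
	var wg sync.WaitGroup

	for w := 1; w <= numWorkers; w++ {
		wg.Add(1)
		go worker(jobs, results, &wg)
	}
	for j := 1; j <= numJobs; j++ {
		jobs <- j
	}
	close(jobs)

	// Close results once every worker has returned, so the range below ends.
	go func() {
		wg.Wait()
		close(results)
	}()

	sum := 0
	for r := range results {
		sum += r
	}
	return sum
}

func main() {
	fmt.Println(run(3, 5)) // 2 + 4 + 6 + 8 + 10
}
```

This removes the need for the consumer to know in advance how many results to expect.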

See also

- Asynchrony (computer programming)

- Object pool pattern

- Concurrency pattern

- Grand Central Dispatch

- Parallel Extensions

- Parallelization

- Server farm

- Staged event-driven architecture

References

1. Garg, Rajat P.; Sharapov, Ilya (2002). Techniques for Optimizing Applications: High Performance Computing. Prentice-Hall. p. 394.

2. Holub, Allen (2000). Taming Java Threads. Apress. p. 209.

3. Ling, Yibei; Mullen, Tracy; Lin, Xiaola (April 2000). "Analysis of optimal thread pool size". ACM SIGOPS Operating Systems Review. 34 (2): 42–55. doi:10.1145/346152.346320. S2CID 14048829.

4. "QThreadPool Class | Qt Core 5.13.1".

5. "GitHub - vit-vit/CTPL: Modern and efficient C++ Thread Pool Library". GitHub. 2019-09-24.

6. Shved, Paul (2010-01-07). "Easy parallelization with Bash in Linux". coldattic.info. Retrieved 2025-01-26.

7. "xargs(1) - Linux manual page". www.man7.org. Retrieved 2025-01-26.

8. "Controlling Parallelism (GNU Findutils 4.10.0)". www.gnu.org. Retrieved 2025-01-26.

9. "Go by Example: Worker Pools". gobyexample.com. Retrieved 2021-07-27.

10. "Effective Go - The Go Programming Language". golang.org. Retrieved 2021-07-27.
   Quote: "another approach that manages resources well is to start a fixed number of handle goroutines all reading from the request channel. The number of goroutines limits the number of simultaneous calls to process"

11. "The Case For A Go Worker Pool". brandur.org. Retrieved 2021-07-27.
   Quote: "Worker pools are a model in which a fixed number of m workers (implemented in Go with goroutines) work their way through n tasks in a work queue (implemented in Go with a channel). Work stays in a queue until a worker finishes up its current task and pulls a new one off."

External links

- "Query by Slice, Parallel Execute, and Join: A Thread Pool Pattern in Java" by Binildas C. A.

- "Thread pools and work queues" by Brian Goetz

- "A Method of Worker Thread Pooling" by Pradeep Kumar Sahu

- "Work Queue" by Uri Twig: C++ code demonstration of pooled threads executing a work queue.

- "Windows Thread Pooling and Execution Chaining"

- "Smart Thread Pool" by Ami Bar

- "Programming the Thread Pool in the .NET Framework" by David Carmona

- "Creating a Notifying Blocking Thread Pool in Java" by Amir Kirsh

- "Practical Threaded Programming with Python: Thread Pools and Queues" by Noah Gift

- "Optimizing Thread-Pool Strategies for Real-Time CORBA" by Irfan Pyarali, Marina Spivak, Douglas C. Schmidt and Ron Cytron

- "Deferred cancellation. A behavioral pattern" by Philipp Bachmann

- "A C++17 Thread Pool for High-Performance Scientific Computing" by Barak Shoshany